Experts at a major cybersecurity conference warn that agentic AI enables faster, adaptive cyberattacks, prompting calls for stronger automated defenses.

At the recent RSA Conference 2026 in San Francisco, experts discussed how AI is rapidly changing the cyber threat landscape, with new agentic tools giving attackers a speed and scale advantage that traditional defenses may struggle to match.

A leaked Anthropic blog post describes the firm’s unreleased Mythos model as a major leap in capability, warning that it could help automate vulnerability discovery and exploitation in ways that make large-scale attacks harder to stop.

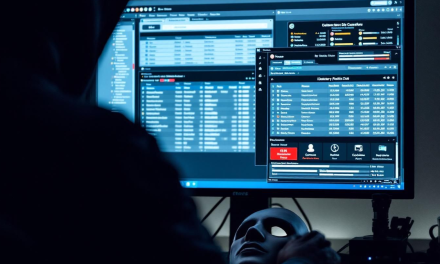

Security leaders cited in the story said the shift is not just theoretical, arguing that AI tools can continuously scan systems, test weaknesses, and adapt faster than human hackers, effectively compressing tasks that once took teams of specialists into a far shorter timeframe. One executive has even described the rise of agentic attackers as a watershed moment for cybersecurity, reflecting growing concern that machine-driven intrusions could overwhelm conventional incident-response workflows.

Recent examples illustrate the risk. In one case, a Russian-speaking hacker reportedly used multiple AI tools, including Claude and DeepSeek, to compromise hundreds of devices across dozens of countries, showing how even modest skill can be amplified by automation. Another alleged campaign involving Mexican government targets underscored fears that AI-assisted attacks are already moving beyond proof-of-concept demonstrations.

The problem is not limited to a single vendor or model. OpenAI has also warned that future systems could pose major cyber risks, suggesting that the issue is broadening as frontier AI models become more capable and easier to misuse. That has pushed defenders to rethink whether existing controls can keep pace with attacks that learn, iterate, and scale almost automatically.

At the same time, experts say AI is not exclusively an offensive force. Organizations are using it to improve monitoring, accelerate patching, and triage alerts more efficiently, potentially strengthening defenses if humans remain in control of decisions. However, the central warning remains clear: attackers need only one path in, while defenders must protect every exposed surface.

The shift could mark a turning point for governments, enterprises, and critical infrastructure operators. As AI lowers the barrier to complex cybercrime, security teams may need faster automation, stronger guardrails, and more rigorous human oversight to avoid losing ground to machine-assisted adversaries.