Skyrocketing AI integration can expose serious gaps in data protection, operational resilience, and responsible use — requiring shared accountability and continuous oversight.

As organizations race to scale AI, security is not keeping pace. AI is increasingly widespread, from decision-making to operations, yet only a fraction of organizations have robust measures to assess and secure their AI systems.

The World Economic Forum (WEF) has reported that 66% of organizations polled in 2024 expected AI to significantly impact cybersecurity in 2025, yet only 37% had processes in place to assess the security of AI tools before deployment.

Meanwhile, AI development and adoption have leapt ahead, with the latest autonomous and self-learning agents set to scale automation (and therefore risks) to new heights.

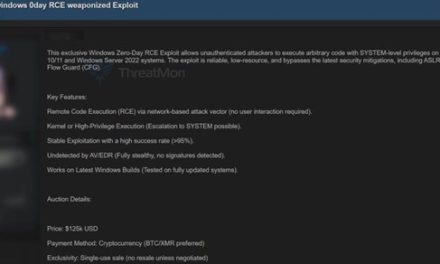

Cybercriminals benefit from AI too

Threat actors are actively targeting AI through model evasion, data poisoning and privacy attacks.

Their tactics, powered by AI, can mislead models, corrupt training data, extract sensitive information or repurpose models for harmful misuse.

Even a tiny fraction of poisoned training data (less than 0.1%) can cause misclassification, undermining trust and leading to faulty outputs that impact operations.

Securing AI is therefore a shared responsibility:

- AI providers (those who develop and train models) must embed security during design and development.

- AI users (organizations deploying and operating AI) must ensure robust safeguards during implementation and ongoing use.

- Partners and regulators play critical roles in setting standards and ensuring compliance.

Does this mean slowing down AI adoption? Absolutely not, but it does mean taking deliberate steps to protect AI.

Just as organizations safeguard intellectual property and operational resilience, securing AI should be integral to maintaining competitive advantage. AI security must be built into the initial phases of model development and deployment, much like cybersecurity in traditional IT systems. If your organization has yet to put in place an AI security framework, now is the time.

Three critical areas of AI security

Core cybersecurity practices such as vulnerability management, anomaly detection and data protection provide a foundation that organizations can build on and scale up for AI-specific safeguards.

While existing controls remain relevant, AI introduces unique risks that require additional capabilities.

For organizations deploying and operating AI systems, three key areas demand attention:

- Protect data integrity

Training data is the lifeblood of AI. Verifying its authenticity and integrity is critical to prevent tampering and ensure models behave as intended. AI models and agents must also be tested for vulnerabilities. For AI users, this means benchmarking resilience and understanding your organization’s security posture. Risks differ depending on how AI is adopted. Organizations developing their own models must manage data quality, model transparency and internal access controls, while those relying on third‑party systems must ensure vendors uphold strong security and privacy standards.

- Ensure operational continuity

As more processes and solutions rely on AI, any disruption can cause service outages. Maintain functionality and accessibility through continuous monitoring, performance measurement and traceability across the AI stack. When errors occur, being able to pinpoint the part of the system at fault enables faster recovery and continuity.

- Prevent abuse and ensure safe use

AI systems embedded in operations require continuous oversight. Mechanisms to detect harmful behavior, correct inappropriate model outputs and enforce accountability are essential. Pair cybersecurity controls with governance and ethics frameworks to embed responsible use throughout operations. Develop a progressive AI Governance Framework to formalize principles, roles and escalation, supported by risk classification, control checklists and training, to build workforce readiness.
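
To make the first of these areas more concrete, here is a minimal Python sketch of one way to verify training data integrity: record a SHA-256 digest for every file when a dataset is approved, then compare against that record before each training run. The function names (`build_manifest`, `verify_manifest`) and the directory layout are hypothetical and chosen only for illustration; they do not come from any specific framework or vendor tool.

```python
from __future__ import annotations

import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir: Path) -> dict[str, str]:
    """Map each file's relative path to its digest (run at approval time)."""
    return {
        p.relative_to(data_dir).as_posix(): sha256_of(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }


def verify_manifest(data_dir: Path, manifest: dict[str, str]) -> dict[str, list[str]]:
    """Compare the files currently on disk against a trusted manifest."""
    current = build_manifest(data_dir)
    return {
        # Files whose content changed since the manifest was recorded.
        "modified": sorted(
            k for k in manifest if k in current and current[k] != manifest[k]
        ),
        # Files listed in the manifest that are no longer present.
        "missing": sorted(k for k in manifest if k not in current),
        # Files on disk that were never part of the approved dataset.
        "unexpected": sorted(k for k in current if k not in manifest),
    }
```

A check like this only detects changes made after the manifest was recorded. It cannot tell whether the data was already poisoned at approval time, and it is only as trustworthy as the place where the manifest itself is stored, so it complements source vetting and access controls rather than replacing them.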

Is that all it takes? No…

The three critical areas outlined above can lay a strong foundation, but more safeguards will be needed as AI adoption matures.

That is why securing AI across its lifecycle, from development to deployment, is fast becoming a priority.

Organizations that act early will protect their operations, maintain trust (through collective, responsible use of AI), and unlock AI’s full potential as a driver of growth.

The common, enduring goal is clear: collaborate to innovate confidently, operate securely and stay ahead in an increasingly intelligent and interconnected world.