As we navigate the technological landscape of 2026, a new category of software – agentic AI – is rapidly reshaping how individuals and organizations work.

Autonomous agentic AI systems, designed to operate as digital employees, promise significant productivity gains. However, their accelerated and largely unregulated adoption has introduced serious and systemic cybersecurity risks, creating a perfect storm of security vulnerabilities.

The poster child for this movement is OpenClaw (formerly Clawdbot or Moltbot), an open-source, self-hosted AI agent that has gone viral for its ability to operate directly on a user’s machine. It can autonomously access files, manage emails, and execute terminal commands, acting as a true digital assistant.

But this revolutionary capability comes with a dark side: its unvetted rise and inherent design have made it one of the most significant cyberthreats of 2026, prompting urgent warnings from governments and security firms worldwide.

Beyond OpenClaw: A growing “digital insider” threat landscape

While OpenClaw remains the most visible example, it is not alone. A new ecosystem of similar tools is emerging, bringing with it a shared set of profound risks that threaten to undermine enterprise security, data privacy, and regulatory compliance.

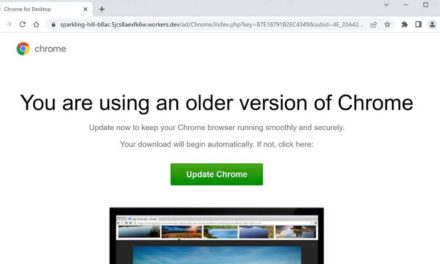

In March 2026, Kaspersky Threat Research identified a new malicious campaign targeting developers searching for installation instructions for Claude Code, a development agent created by Anthropic, and for other popular AI tools, including OpenClaw. Victims are tricked into installing malware that harvests sensitive information, including credentials, crypto wallet data, browser sessions, and other confidential files.

Other agentic platforms – such as SuperAGI, Nanobot, PicoClaw, and Manis – exhibit similar risk patterns, often requiring elevated permissions or lacking secure defaults.

Collectively, these tools function as “digital insiders” capable of bypassing traditional security controls.

Why agentic AI is a security minefield

Security analyses from early 2026 have converged on five primary dangers associated with unmanaged agentic AI tools:

1. Critical technical vulnerabilities

The speed of deployment has outpaced secure design. For example, a recent high‑severity vulnerability (CVE‑2026‑25253, CVSS 8.8) enables full administrative takeover of an OpenClaw gateway through a single malicious link. Poor default configurations have left tens of thousands of instances publicly exposed without authentication, enabling large‑scale agent hijacking, credential theft, and arbitrary command execution.
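The exposure problem described above comes down to two settings: which network interface a service listens on, and whether authentication is enabled. The sketch below is a minimal illustration of that check, not OpenClaw's actual configuration logic. The function name `is_publicly_exposed` and its two parameters are assumptions made for this example.

```python
import ipaddress


def is_publicly_exposed(bind_host: str, auth_enabled: bool) -> bool:
    """Return True when a listener accepts non-local traffic without authentication.

    Hypothetical helper: bind_host and auth_enabled stand in for whatever
    settings a given agent gateway actually uses.
    """
    if auth_enabled:
        return False
    if bind_host == "localhost":
        return False
    if bind_host == "":
        # An empty host commonly means "listen on all interfaces".
        return True
    try:
        address = ipaddress.ip_address(bind_host)
    except ValueError:
        # Not an IP literal: treat it as exposed so that it gets reviewed.
        return True
    return not address.is_loopback
```

A check like this flags `0.0.0.0` without authentication and accepts `127.0.0.1` or `::1`. It says nothing about reverse proxies or port forwarding, which can expose a loopback-only service as well.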

2. Supply chain and plugin abuse

Agent functionality is often extended through third‑party “skills” distributed via public repositories. While intended to encourage innovation, these marketplaces have become a significant attack vector. Security reviews have identified hundreds of malicious plugins masquerading as legitimate tools, many designed to deploy infostealer malware and gain persistent system access.
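One common defense against tampered or impersonated plugins is to install only archives whose hash matches a value recorded when the plugin was reviewed. The following is a minimal sketch of that idea using SHA-256. The skill name, the `pinned` mapping, and both function names are hypothetical and do not correspond to any real marketplace API.

```python
import hashlib
from typing import Mapping


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()


def is_approved_skill(name: str, archive: bytes, pinned: Mapping[str, str]) -> bool:
    """Accept a skill only if its archive matches the hash pinned at review time.

    pinned is a hypothetical allowlist: skill name -> expected SHA-256 hex digest.
    Unknown skills are rejected rather than trusted by default.
    """
    expected = pinned.get(name)
    if expected is None:
        return False
    return sha256_hex(archive) == expected.lower()
```

Hash pinning only proves that the archive is the one that was reviewed. It does not show that the reviewed code was safe, so it complements a vetting process rather than replacing it.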

3. Inherent agentic risks

Beyond traditional software flaws, agentic AI introduces novel threats resulting from its autonomy:

- Indirect prompt injection: Because these agents read and process external data (web pages, emails, messages), attackers can embed hidden, malicious instructions in seemingly benign content. When the agent processes this content, it can be subverted into leaking sensitive data, deleting files, or even sending phishing messages to the user’s entire contact list.

- Memory poisoning: Many agents maintain a persistent memory, or “long-term context.” A successful prompt injection can corrupt this memory, effectively reprogramming the agent to act maliciously in future sessions, long after the original malicious content has been removed. The result is a persistent backdoor that is difficult to detect.

- Uncontrollable behavior: The very autonomy that makes these tools powerful also makes them unpredictable. They can misinterpret commands or escalate privileges, leading to unauthorized actions like deleting critical system files or emails without any further user confirmation.
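The three risks above share one mitigation pattern: destructive actions should not run on the agent's own authority, especially after it has read external content. The sketch below illustrates such a policy gate under stated assumptions. The action names, the `ToolCall` type, and the three-way decision are all hypothetical, and a real agent would define its own tool catalogue and taint tracking.

```python
from dataclasses import dataclass

# Hypothetical action names chosen for illustration only.
HIGH_RISK_ACTIONS = frozenset({"delete_file", "send_email", "run_shell"})


@dataclass(frozen=True)
class ToolCall:
    action: str
    # True if web pages, emails, or messages were read in the current context.
    context_has_untrusted_input: bool


def decide(call: ToolCall) -> str:
    """Return 'allow', 'ask_user', or 'deny' for a proposed tool call."""
    if call.action not in HIGH_RISK_ACTIONS:
        return "allow"
    if call.context_has_untrusted_input:
        # A high-risk action proposed after reading external content is the
        # typical shape of an indirect prompt injection, so refuse it outright.
        return "deny"
    # High-risk actions always need explicit human confirmation.
    return "ask_user"
```

A gate like this does not detect injected instructions. It only limits what a subverted agent can do without a human in the loop.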

4. Systemic credential theft and privacy loss

Agentic AI tools are, by design, a treasure trove of sensitive information. They require access to a user’s digital life to function. However, this data is often stored insecurely. For example, OpenClaw frequently stores API keys, passwords, and authentication tokens for large language models (LLMs) and messaging apps in unencrypted, plaintext JSON files.

A single compromised instance can therefore grant an attacker unfettered access to the user’s entire digital history, including chat logs, linked accounts (Slack, Telegram, Git), and all associated cloud services.
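Defenders can audit for this kind of storage by scanning configuration files for secret-looking keys that hold plaintext string values. The sketch below walks a parsed JSON document and reports where such values sit. The key pattern and the sample configuration are assumptions for illustration and do not reflect OpenClaw's real file layout.

```python
import json
import re

# Assumed naming conventions for secret-bearing keys; adjust to the tool audited.
SECRET_KEY_PATTERN = re.compile(r"(api[_-]?key|token|password|secret)", re.IGNORECASE)


def find_plaintext_secrets(node, path=""):
    """Return paths of non-empty string values stored under secret-looking keys."""
    findings = []
    if isinstance(node, dict):
        for key, value in node.items():
            child = f"{path}.{key}" if path else str(key)
            if isinstance(value, str) and value and SECRET_KEY_PATTERN.search(str(key)):
                findings.append(child)
            else:
                findings.extend(find_plaintext_secrets(value, child))
    elif isinstance(node, list):
        for index, item in enumerate(node):
            findings.extend(find_plaintext_secrets(item, f"{path}[{index}]"))
    return findings


# Made-up sample configuration, not a real file format.
sample = json.loads(
    '{"llm": {"api_key": "sk-example"}, "chat": [{"bot_token": "123:abc"}], "theme": "dark"}'
)
print(find_plaintext_secrets(sample))
```

The scan reports only locations, never the secret values themselves, so its output can be shared safely in a ticket or report.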

5. “Shadow AI” and regulatory risk

The most significant threat to organizations may not be external hackers, but their own employees. In a phenomenon now dubbed “Shadow AI,” staff are installing tools like OpenClaw on work machines without IT approval to boost their personal productivity. This creates a massive, unmonitored, and unmanaged attack surface within the corporate network.

Furthermore, the local-first, unmonitored nature of these tools can put organizations in breach of data protection requirements such as GDPR, HIPAA, and PCI DSS. By allowing agents to autonomously process sensitive corporate or customer data without oversight, organizations are exposing themselves to serious compliance failures and legal liability.

The “lethal trifecta” and the path forward

When evaluating any agentic tool, security experts point to a “lethal trifecta” of risk factors:

- Unauthorized system access: The need for “God Mode” privileges to access SSH keys, source code, and shell commands.

- Unvetted “skills” marketplaces: Public repositories that are prime targets for supply chain attacks (for instance, an estimated 12% of ClawHub skills were found to be malicious in early 2026).

- Prompt injection & goal hijacking: The vulnerability of the AI’s core reasoning engine to hidden adversarial instructions.

In response to this escalating threat, a new generation of security‑first platforms is emerging, such as NanoClaw with its “don’t trust the agent” philosophy, ZeroClaw with an explicit allowlist for commands, and Moltis with its emphasis on observability and sandboxing.
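To make the allowlist idea concrete, here is a minimal sketch of a command allowlist. It is not ZeroClaw's implementation. The `ALLOWED_COMMANDS` table, the metacharacter set, and the function name `parse_allowed` are assumptions chosen for illustration.

```python
import shlex

# Hypothetical allowlist: program name -> permitted first arguments (subcommands).
ALLOWED_COMMANDS = {
    "git": {"status", "diff", "log"},
    "ls": None,  # None means any arguments are accepted for this program
}

# Characters that could chain or redirect commands if a shell ever saw the string.
SHELL_METACHARACTERS = set(";&|<>`$\n")


def parse_allowed(command_line: str):
    """Return the argv list if the command is on the allowlist, otherwise None."""
    if any(ch in SHELL_METACHARACTERS for ch in command_line):
        return None
    try:
        argv = shlex.split(command_line)
    except ValueError:
        return None  # for example, unbalanced quotes
    if not argv or argv[0] not in ALLOWED_COMMANDS:
        return None
    subcommands = ALLOWED_COMMANDS[argv[0]]
    if subcommands is not None and (len(argv) < 2 or argv[1] not in subcommands):
        return None
    return argv
```

The returned list is meant to be passed to a process launcher as an argument vector, never as a single string to a shell. Everything not explicitly listed is rejected, which is the point of an allowlist compared with blocking known-bad commands.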

For organizations that intend to trial agentic AI platforms, experts advise CISOs to prioritize isolation, sandboxing, and explicit permission models over unrestricted autonomy before any deployment.

Agentic AI represents a fundamental shift in how we interact with technology, offering unprecedented levels of automation and efficiency. However, the gold rush mentality surrounding tools like OpenClaw has unleashed a new class of systemic risk.

For organizations, the message from 2026 is clear: productivity gains from agentic AI must be matched by rigorous security architecture, strong governance, and proactive risk management.

The future of work may be autonomous, but it cannot afford to be unsecured.