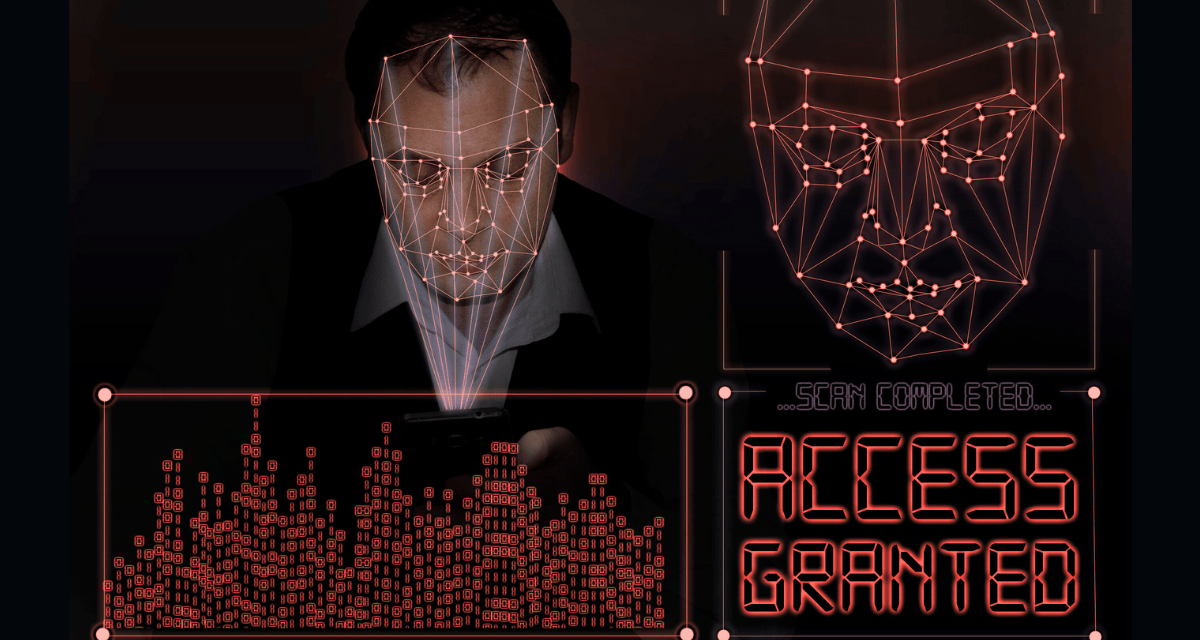

Chatter in Dark Web forums betrays the frustration of malicious actors who have not found a way to circumvent advanced biometrics.

Dark Web marketplaces, often dubbed fraud superstores, are expanding rapidly to supply tools for bypassing modern anti-fraud systems. These illicit hubs offer everything from KYC-ready bank accounts to beginner tutorials and plug-and-play kits, fueling a surge in the number of amateur cybercriminals.

Yet, beneath the surface, fraudsters have been hitting a wall: advanced AI-driven deepfake detection technologies that they openly admit cannot be circumvented.

According to one data analytics firm, Dark Web forum discussions reveal mounting frustration among users attempting deepfake scams. Criminals have been venting about detection systems that analyze real-time biometric signals in video feeds, such as blood flow and micro-muscle movements.

These checks expose fakes, even elaborate ones using latex masks, by identifying physiological anomalies invisible to the naked eye. One anonymous post sums it up starkly: “There is no bypass.” This marks a shift in the cat-and-mouse game: for now, defensive AI appears to be evolving faster than offensive tactics.

Fraudsters who used to rely on stolen credentials or pre-verified accounts now struggle, because these systems cross-reference live data in milliseconds. This pushes them toward riskier workarounds, such as sourcing “fraud-ready” devices that mimic legitimate behavior, but even these falter under scrutiny.

Marketplace instability adds pressure

Nor do the marketplaces themselves offer refuge. Exit scams, in which operators vanish with users’ funds, plague these platforms and have prompted self-policing measures such as user bans and item restrictions.

In response, some fraud services have migrated to mainstream social media for convenience, eroding the anonymity advantage that once set the Dark Web apart. Regulators routinely shutter sites, only for clones to emerge, and the core demand persists as scammers proliferate worldwide.

According to LexisNexis Risk Solutions, which shared these findings* in a fraud report, the trend underscores AI’s double-edged role in cybersecurity: it empowers fraud while also enabling stronger countermeasures.^

As amateurs flood the fraud scene with AI-powered tools, prioritizing biometric verification has become essential for IT infrastructure. Banks and retailers that implement advanced liveness checks correctly should see lower success rates for deepfake attacks.

*Data gleaned from the firm’s own financial services clients and from proprietary surveys, including marketplace scans and forum analysis.

^Other 2025 analyses from cybersecurity firms have offered differing perspectives on the fraud landscape.