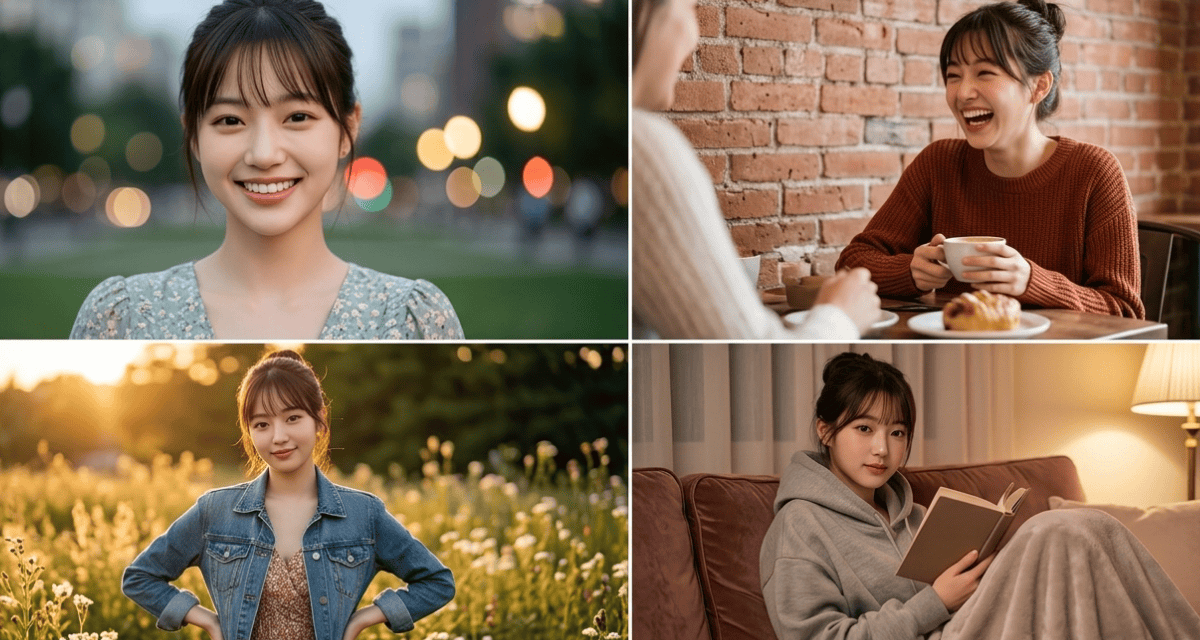

Analysis of 1,500+ AI-generated profiles reveals telling warning signs. Here are 3 red flags to look out for.

Recently, BranditScan released its February 2026 analysis of AI-generated profile detection in romance scams, reviewing 1,500+ flagged accounts to identify recurring image and conversation patterns.

The key takeaway: the most effective AI scams aren’t the obvious fakes. They’re the profiles that look “normal,” sound fluent, and are your exact match – until they ask for money or personal data.

Last year alone, Americans lost over $1.3B to online romance scams, with AI-generated profiles and automated messages targeting people looking for “the one.” Asia Pacific is not exempt either.

The same tactics, built on AI-generated identities or deepfakes, can be applied in other areas, such as matching for professional services or remote employees, across all industries and geographical regions.

The BranditScan analysis of 1,500+ flagged profiles found that today’s AI profiles aren’t obvious bots with broken English or blurry photos. Instead, they are hyper-realistic, data-driven, and specifically engineered to match your preferences.

According to the report: “The profile doesn’t feel fake anymore. It mirrors your interests, uses your language, and replies within seconds. Victims assume all this is chemistry. In reality, it’s just an AI. By the time money or personal data is requested, you’re already emotionally invested.”

The red flags

Paying attention to a few key red flags goes a long way toward detecting AI-powered scams and fraud:

- Something’s off in their eyes

While AI has mastered skin texture, it still struggles with realistic lighting – especially in the eyes. Research confirms that 94% of AI-generated faces contain inconsistent light reflections. In real photos, both eyes should reflect the same light source, such as a window or phone screen. In AI-generated images, those reflections are often mismatched.

What users should know: Zoom in on the pupils. If one eye reflects a clear object and the other shows a distorted light source, the image may be AI-generated.

- Their Wi-Fi “keeps glitching”

Among the fraud cases reviewed, 40% involved deepfake video calls, in which scammers used real-time face-swap filters to appear as someone else. Roughly 50–60% of victims said the video convinced them, despite subtle glitches like “clipping” or sudden lighting changes.

What users should know: If you are on a video call with them, ask them to turn their head fully to the side or wave their hand in front of their face. If their face blurs, flickers, or the hand “clips” through their cheek, hang up immediately.

- They’re falling for you way too fast

In AI romance scams, the love-bombing is faster, more intense, and more consistent than anything seen in traditional scams. AI models are built to maximize engagement, often intensifying intimacy up to 300% faster than a real person would. In many cases, conversations shift from “stranger” to “soulmate” in under 100 messages – creating emotional attachment before skepticism has time to kick in.

What users should know: Test the relationship’s patience. If a simple boundary (“I can’t talk tonight”) triggers an instant, perfectly worded guilt trip, you’re likely reading a script. Real people hesitate and take time. Bots can reply in polished paragraphs, instantly.

The bottom line

“Most people think they’re looking for obvious warning signs, such as bad grammar, blurry photos, or strange behavior. But the most telling red flag in 2026 is perfection,” warned experts at BranditScan.

“If the pictures look flawless, the replies are instant, and the connection feels almost too good to be true, you’re likely not talking to a person. What feels like fate today can turn into a 6-figure loss by Friday.”